While the tool is mainly designed for students of computer vision, you can use it to create deepfake videos. If you are searching for a Windows program that lets you create funny deepfake videos, then DeepFaceLab might be your best pick.ĭeepFaceLab is a bit advanced as it uses machine learning and human image synthesis to replace faces in the videos. The animated version that the app delivers will have its face, eye, and mouth moving. MyHeritage is great for creating viral deepfake videos, and the animated items it makes look very realistic. The app will animate your photo in just a matter of seconds. MyHeritage has a dedicated app for Android and iOS, and all you have to do is upload an image and tap on the animate button. It’s a funny service you can use it from almost any device.

It’s an app mainly used by social media influencers to animate old photos. Bean, or your friend, whatever you can think of.Īnother deep fake service is MyHeritage. The character can be anything it can be a photo of Elon Musk, Mr. You can use any of these songs to make your character sing them.

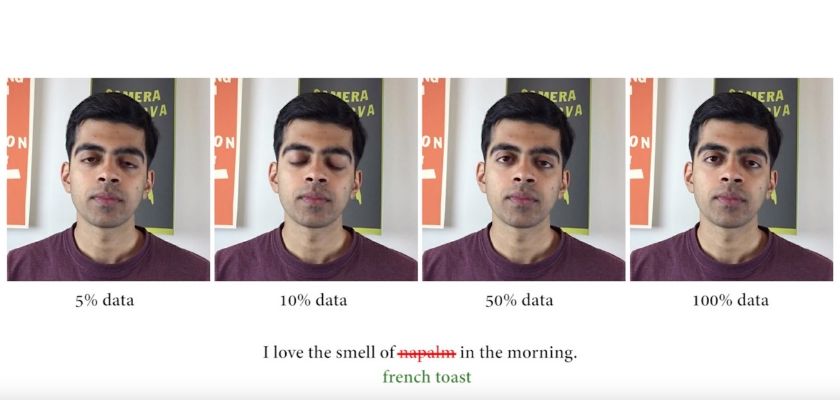

To get started, the app provides you with 15 songs. Wombo is a free app available for Android and iOS, and it’s a great way to create deep fake videos on Android and iPhone. Have you ever thought about how that is made? It’s made through the Wombo app. You may see celebrities or influencers hilariously singing songs. For example, I use this little video to welcome my students to class each term.ĭo you want to learn more about how it works? Aliaksandr posted a very good video explaining that here.If you stay active on social networking platforms, you might have seen people sharing lip-syncing photos. With a bit of post-processing, you can make your own short deepfake video. In our Google co-lab, all you need to do is upload a folder with the target image, upload your own video (source) to your google drive, and run the co-lab notebook block-by-block. To explain how deepfakes work, we created a simple Google co-lab for you that builds on the initial code from Aliaksandr Siarohin - who (no surprise here) - works for Snap. Since a video is a collection of images (frames), placed one after the other, this neural network allows us to photoshop each frame in a video. The computer automatically maps the right parts of one’s source images onto the destination image. The initial neural network is trained on many hours of real video footage of people to help the AI recognize various important features of the person’s face - such as the eyes, upper and lower lips, teeth, ears, eyebrows, an outline of the face, etc. The underlying AI detects facial expressions, eye movements, and head position - which are then superimposed on either a unicorn, a potato, or whatever Snap-Filter is en-vogue. Anyone who has used Snap will have used such an approach. See full article: Thanh Thi Nguyen First-Order Motion ModelĪ slightly different approach for deepfakes is to replace the encoder with a motion model. 
Next, the decoder will revert this information back into an image of a “2 “based on how the computer imagines it. After the encoding stage, the computer stores the information or latent image for a number this is just the information “this is a two”. Take a look at Dall-E 2 to see how powerful this process can be.īelow is the process of encoding and decoding an image of a “2”. Once the computer has encoded many similar images (like images from a cat etc.), it can reverse this process, and go from “cat” to an image. How? By using the knowledge stored in your brain. Our brain has created a connection between the image and the encoded-word “cat.” How do we know that a given object is a cat? Well, we have seen many cats. We see a cat, and we use the word (e.g., representation) “CAT” for it. Going from an original image to a latent image is called encoding. Or said differently, what you see in those layers (if you were to open up the Deep Neural Network Black Box) is no longer the reality that one can observe, but rather an inferred state based on the mathematical model of the AI layers before. Each layer represents a mathematical abstraction of the prior layer. Encoder-decoder pairsĭeep Learning models have different layers. Rather, they leverage either encoder-decoder pairs or first-order motion models. This is pretty cool! Today, though, most tools for deepfakes are not using GANs. This way, the two neural networks train each other and become more and more realistic. Essentially the second neural network is checking whether the image of the first network is real or fake. The second neural network - called the discriminative classifier - checks the first, generative neural network. Now you’re thinking: What about the other neural network set up by GAN? I’m glad you ask - this is where the “adversarial” component comes in.
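To make the encoder-decoder idea from this article a bit more concrete, here is a minimal sketch in plain Python. It is not the code behind any deepfake tool: it assumes a toy data set of 2-D points instead of images, a single latent number instead of a latent image, and hypothetical helper names (`encode`, `decode`, `train`). The encoder squeezes each point into one number, and the decoder tries to rebuild the point from that number alone.

```python
# Toy linear autoencoder: 2-D point -> 1 latent number -> 2-D reconstruction.
# Illustrative assumption only; real deepfake models use deep networks on images.

# Every sample lies on the line y = 2x, so one latent number can describe it.
DATA = [[-1.0, -2.0], [-0.5, -1.0], [0.0, 0.0], [0.5, 1.0], [1.0, 2.0]]


def encode(x, enc):
    """Compress a 2-D point into a single latent value."""
    return enc[0] * x[0] + enc[1] * x[1]


def decode(z, dec):
    """Expand the latent value back into a 2-D point."""
    return [dec[0] * z, dec[1] * z]


def reconstruction_loss(data, enc, dec):
    """Mean squared reconstruction error over the data set."""
    total = 0.0
    for x in data:
        x_hat = decode(encode(x, enc), dec)
        total += sum((a - b) ** 2 for a, b in zip(x_hat, x))
    return total / len(data)


def train(data, steps=500, lr=0.05):
    """Fit encoder and decoder weights with plain gradient descent."""
    enc, dec = [0.1, 0.1], [0.1, 0.1]  # small, arbitrary starting weights
    n = len(data)
    for _ in range(steps):
        grad_enc, grad_dec = [0.0, 0.0], [0.0, 0.0]
        for x in data:
            z = encode(x, enc)
            x_hat = decode(z, dec)
            err = [x_hat[i] - x[i] for i in range(2)]
            # Chain rule: the error flows back through the decoder to z.
            grad_z = sum(2 * err[i] * dec[i] for i in range(2))
            for i in range(2):
                grad_dec[i] += 2 * err[i] * z
                grad_enc[i] += grad_z * x[i]
        enc = [enc[i] - lr * grad_enc[i] / n for i in range(2)]
        dec = [dec[i] - lr * grad_dec[i] / n for i in range(2)]
    return enc, dec


initial_loss = reconstruction_loss(DATA, [0.1, 0.1], [0.1, 0.1])
enc, dec = train(DATA)
final_loss = reconstruction_loss(DATA, enc, dec)

latent = encode([0.5, 1.0], enc)   # the compact, stored representation
restored = decode(latent, dec)     # the decoder's reconstruction of the input
print(f"loss before: {initial_loss:.4f}, after: {final_loss:.6f}")
print("latent:", latent, "restored:", restored)
```

The latent value plays the role of the stored "this is a two" information: it is much smaller than the input, yet the decoder can rebuild something close to the original from it.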

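The motion-model idea from the First-Order Motion Model part of this article can be sketched in a similar spirit. The snippet below is a strong simplification and not Aliaksandr Siarohin's code: it assumes that facial keypoints have already been detected, uses made-up coordinates, and only moves a few landmarks instead of rendering pixels. The function name `transfer_motion` and the keypoint names are hypothetical. For every frame of the source video, it measures how far each keypoint has moved since the first frame and applies the same shift to the target image's keypoints.

```python
# Toy relative-motion transfer on facial keypoints (hypothetical data).
# A real motion model predicts dense motion and renders pixels; this sketch
# only shifts a handful of (x, y) landmarks to show the idea.


def transfer_motion(target_kp, source_frames):
    """Apply each source frame's motion, measured relative to the first
    source frame, to the keypoints of the still target image."""
    reference = source_frames[0]
    animated = []
    for frame in source_frames:
        moved = {}
        for name, (tx, ty) in target_kp.items():
            dx = frame[name][0] - reference[name][0]
            dy = frame[name][1] - reference[name][1]
            moved[name] = (tx + dx, ty + dy)
        animated.append(moved)
    return animated


# Keypoints of the still target image (for example, the photo to animate).
target_kp = {"left_eye": (30.0, 40.0), "mouth": (50.0, 80.0)}

# Keypoints detected in three frames of your own (source) video.
source_frames = [
    {"left_eye": (10.0, 10.0), "mouth": (20.0, 30.0)},
    {"left_eye": (12.0, 10.0), "mouth": (20.0, 35.0)},
    {"left_eye": (14.0, 11.0), "mouth": (20.0, 32.0)},
]

animated_frames = transfer_motion(target_kp, source_frames)
for index, keypoints in enumerate(animated_frames):
    print(index, keypoints)
```

Because the motion is taken relative to the first source frame, the first animated frame is identical to the target image, and later frames follow the movement in the video one frame at a time.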